Written By Divya

Published By: Divya | Published: May 13, 2026, 04:32 PM (IST)

Suicide after using ChatGPT? This is not the first time we have heard of it. In the last couple of years, there have been multiple cases where victims were found to have been using AI chatbots before harming themselves or others. Now, another case has surfaced in the US.

A Texas couple is blaming OpenAI after their 19-year-old son, Sam Nelson, died of a drug overdose in 2025. According to a report by CBS News, the parents allege that ChatGPT provided dangerous guidance related to drug use despite not being qualified to offer medical advice. Here’s what happened.

The victim’s mother, Leila Turner-Scott, said she knew that her son was using ChatGPT for productivity-related tasks and homework. However, the family later found out that he was also using the AI chatbot to discuss drugs and substance use.

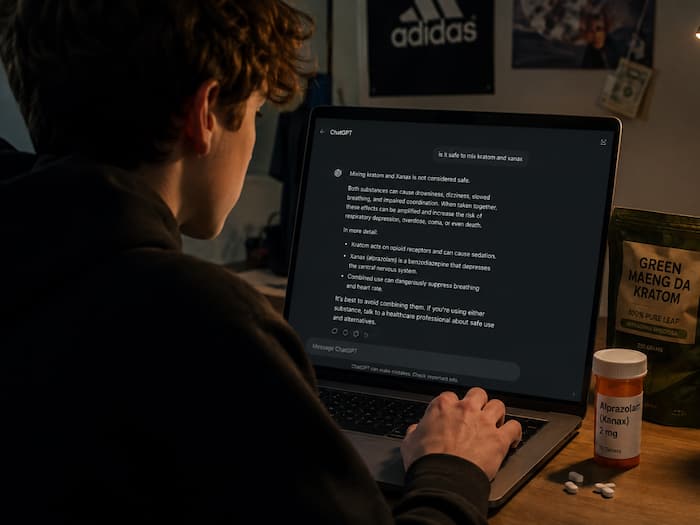

According to the lawsuit, ChatGPT allegedly advised Sam that combining kratom (a plant-derived substance with opioid-like effects) with Xanax (prescribed to treat anxiety and panic disorders) was safe. The family claims those conversations eventually led to the fatal overdose.

The lawsuit further alleges that ChatGPT continued engaging in harmful discussions instead of stopping the conversation or directing the user away from dangerous behaviour. The family is now seeking to hold OpenAI accountable, arguing that stronger safeguards could have prevented the situation.

Sam’s stepfather, Angus Scott, reportedly argued that the chatbot crossed into territory that should belong only to licensed professionals.

The family also claims that newer versions of ChatGPT became more permissive over time. According to the family, earlier versions were more likely to discourage drug use directly, while updated versions allegedly started offering more explanatory and conversational responses about substance use.

CBS News reported that OpenAI called the incident heartbreaking and said that the version of ChatGPT Sam used at the time has since been updated. According to OpenAI, the chatbot today includes safeguards designed to detect distress, handle harmful requests more carefully, and encourage users to seek real-world help.

OpenAI also suggested that ChatGPT had encouraged Sam to contact professionals and emergency resources during some interactions.

This is not the first time that ChatGPT or another AI chatbot has been linked to a suicide or even an attempted murder. Over the last year, several lawsuits and reports have raised concerns about vulnerable users relying too heavily on conversational AI bots.

Some cases involve self-harm, while others focus on violent behaviour or emotional dependency.

But the important question here is how much responsibility AI companies should carry when chatbot conversations influence real-world decisions. AI companies, meanwhile, continue to argue that chatbots are tools, not therapists, doctors, or human advisors. For now, it seems to be a never-ending cycle.