Written By Shubham Arora

Edited By: Shubham Arora | Published By: Shubham Arora | Published: May 14, 2026, 08:30 PM (IST)

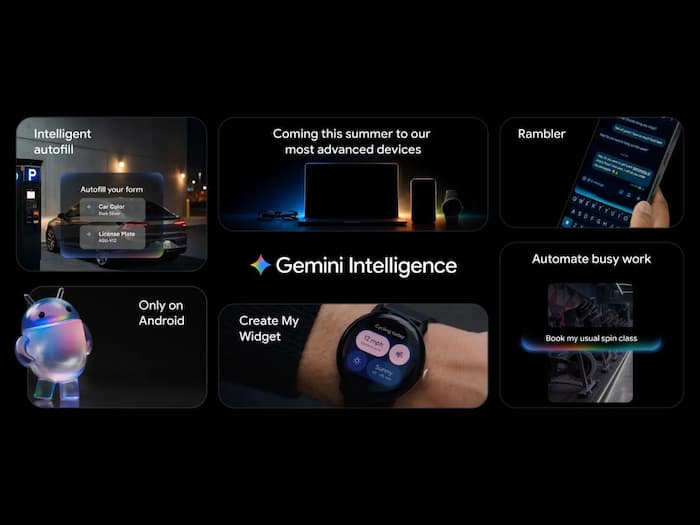

Google is pushing deeper into AI with something it calls Gemini Intelligence, and this time the company is moving beyond the usual chatbot experience. Instead of just answering questions, Gemini is being positioned as an AI system that can actually handle tasks across Android devices.

The announcement was made during The Android Show 2026 ahead of Google I/O, where the company previewed how AI agents could become part of daily smartphone use. The rollout will begin with newer Samsung Galaxy and Pixel devices before expanding to watches, cars, laptops, and other Android products later this year.

The biggest shift here is automation. Google says Gemini Intelligence will be able to move between apps and complete multi-step actions on behalf of users.

For example, users could ask Gemini to find a syllabus in Gmail and then add required books to a shopping cart. Another example shown by Google involved turning a grocery list into an online order without manually switching between apps.

The company is also adding visual understanding. Instead of typing long prompts, users can hold the power button while viewing content on-screen and ask Gemini to perform actions based on what it sees.

Google says users will remain in control, with Gemini asking for confirmation before completing transactions.

Google is also bringing Gemini into Google Chrome on Android. The browser will be able to summarise web pages, compare information across tabs, and even help with bookings or reservations.

Another change is coming to Autofill. Instead of only filling basic details like names or addresses, Gemini Intelligence will pull relevant information from connected apps to complete forms more intelligently.

There is also a new feature called Rambler, which turns natural speech into cleaner text by removing pauses and filler words. Google says it supports multiple languages, including Hindi.

The company is additionally experimenting with AI-generated widgets. Users can describe what they want, and Gemini can create custom widgets around that request.

While Google is moving aggressively with Gemini, Apple is also expected to reveal a revamped version of Apple Intelligence at WWDC 2026 next month. Interestingly, reports suggest Gemini itself may power some Siri and Apple Intelligence features as part of Google and Apple’s ongoing AI partnership.

That also puts pressure on Apple. Unlike Android, where Google controls both the operating system and many core services, Apple is still trying to expand AI deeper into its ecosystem without disrupting the iPhone’s app-focused structure.

At the same time, OpenAI is reportedly exploring AI-first hardware. Reports around Sam Altman’s AI device project suggest the company wants AI agents to become central to how users interact with future devices.

That means the competition is no longer just about chatbots. Companies are now trying to build AI systems that can understand context, move across apps, and complete actions with minimal input from users. And with Android already powering most smartphones globally, Google currently has one major advantage: scale.